Top 7 Ethical Challenges in Artificial Intelligence Today

You are enthusiastic about Artificial Intelligence (AI). You see other organisations using it as a competitive advantage, and you know it can save time, reduce costs and improve decision-making. Yet you have an underlying anxiety. What if the AI doesn’t work? What if it makes a biased judgement? What if it discloses private data? What if it damages your organisation’s reputation? These are some of the critical concerns behind the ethical challenges in Artificial Intelligence. As the number of organisations using AI grows rapidly, these challenges have moved from theoretical discussion to actual business risk. Through my work with organisations going through digital transformation, it has become apparent that innovation without ethics creates risk. In this article, I outline seven of the top ethical challenges in Artificial Intelligence and what you should consider before fully adopting AI.

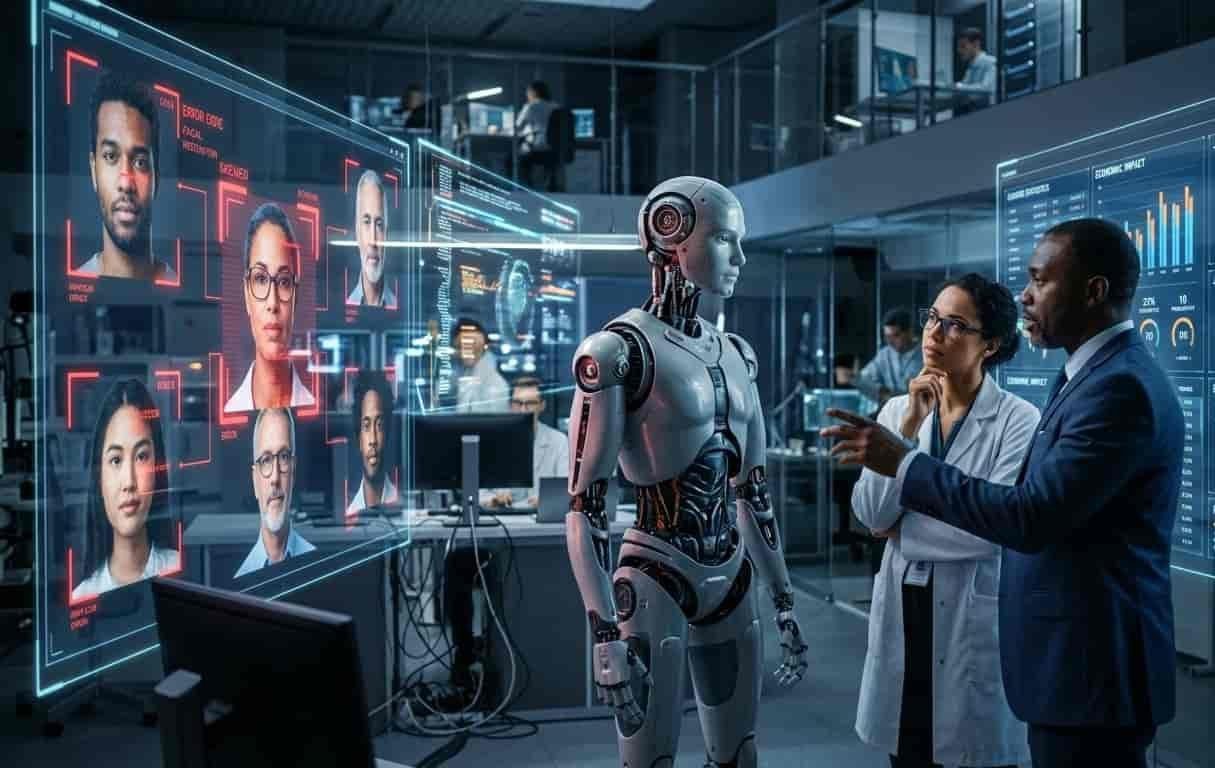

Biases and Prejudices

The greatest ethical challenge in AI is bias and prejudice. AI systems learn from data; when you train on a historical data set that reflects biased human behaviour, the AI will reproduce that bias. It will not question the bias; it can even magnify it. For example, hiring software has been biased toward certain demographic groups, and facial recognition software has had difficulty accurately identifying people from various ethnic groups.

These are examples of ethical challenges that do not arise from malicious machines. They arise from faulty data and preconceived notions. If you use AI in hiring, lending, healthcare or law enforcement without carefully considering these biases, you could face legal consequences as well as negative publicity for your company.
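One simple way to start looking for this kind of bias is to compare how often each group receives the favourable outcome. The sketch below is only an illustration under stated assumptions: the group names and outcome counts are made up, the function names are hypothetical, and the 0.8 cut-off is an assumed rule of thumb (loosely based on the "four-fifths" guideline), not a legal test or a complete fairness audit.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of favourable outcomes per group.

    `records` is an iterable of (group, selected) pairs, where `selected`
    is True when the person received the favourable outcome.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest group selection rate to the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected, so no group is favoured
    return min(rates.values()) / highest


# Hypothetical, made-up hiring outcomes used purely for illustration.
sample = (
    [("group_a", True)] * 40 + [("group_a", False)] * 60
    + [("group_b", True)] * 20 + [("group_b", False)] * 80
)

rates = selection_rates(sample)
ratio = disparate_impact_ratio(rates)

THRESHOLD = 0.8  # assumed cut-off; choose one appropriate to your context
needs_review = ratio < THRESHOLD
print(rates, round(ratio, 2), needs_review)
```

A low ratio does not prove discrimination on its own, but it is a signal that the data or the model deserves a closer look before deployment.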

Privacy of Data and Surveillance

Data privacy and surveillance is another major ethical challenge in Artificial Intelligence. AI systems rely on access to large quantities of personal data in order to operate effectively. User data fuels intelligent systems, whether it comes from browsing behaviour or from voice recordings. But do users really know how much of their data is being collected and analysed? The challenge becomes greater when consent is unclear or hidden in long terms of service agreements. If you are transacting with AI-based applications or creating value from AI-based activities, then you need to question whether your data practices are clear, fair, and compliant.

Lack of Transparency (The Black Box Issue)

The “black box” issue is one of the more intricate ethical dilemmas we face with AI. It is often unclear how some of today’s most sophisticated AI programs arrive at their conclusions; even the engineers who created them do not always have a clear understanding. For example, when an AI model denies a person a mortgage or alerts a doctor to a potential health risk, is there any way to receive a logical explanation of the rationale behind that decision? When decisions affect people’s futures, failing to provide an explanation (transparency) can create serious risk.

The ethical issues surrounding artificial intelligence are, at their core, about trust. When customers lose trust in you, they become less willing to accept decisions they cannot understand. If you cannot explain the AI’s rationale to the general public in simple terms, you, as a vendor, will ultimately lose credibility with your customers and with regulators.

Accountability and Responsibility

Another important ethical challenge in Artificial Intelligence is accountability. If an AI system makes a mistake, who is responsible for it? Is it the developer who built the algorithm, the company that deployed the product, or the individual user who relied on it? The challenge becomes greater when an automated system has caused financial harm, discrimination, or even bodily injury. You cannot simply shift responsibility for mistakes onto an algorithm. If you are implementing AI in your company, then those who implement it will bear the ultimate liability. Establishing a strong governance structure for your AI deployment before any of these problems occur is therefore critically important.

The Effects of Job Loss and the Economy on Society

Job loss due to artificial intelligence (AI) has generated significant public debate about the ethics of this technology and its impact on society. The introduction of AI into customer service, manufacturing, marketing, and even certain professional services is changing current job roles. The rate of AI adoption in many areas has been unprecedented and is fundamentally different from previous changes to the labour force brought about by other technologies. To be a responsible and ethical employer, you must weigh the economic pros and cons of introducing AI and consider retraining existing employees or helping them find new employment instead of simply terminating them.

Misinformation and Deepfakes

Misinformation is one of the fastest-growing ethical challenges in artificial intelligence. AI-generated text, audio, and video can now be produced at a quality that makes it difficult for people to distinguish from authentic content. Deepfake videos and AI-generated fake news are often used to harm people’s reputations and influence public perception. The ethical implications are significant, as the technology can undermine trust on a large scale. For businesses, your brand may also be targeted or impersonated by others. As a result, monitoring digital material and developing response plans is becoming increasingly necessary.

Autonomous Decision-Making and Safety Risks

The use of autonomous decision-making systems is one of the most important and risky ethical issues. Many types of autonomous systems are already available, including, but not limited to, self-driving cars and robots that use automated detection to diagnose patients. As these systems take on a larger role in everyday life, adequate human oversight and rigorous testing will be critical to reducing the risk of safety and health incidents. Human oversight and failure-prevention measures (e.g. backup systems) must therefore not be optional as AI continues to develop.

Why These Ethical Challenges Matter More Than Ever

Ethical problems related to artificial intelligence are not merely theoretical, nor are they limited to academic study. They affect businesses, governments and individuals directly. Every day, AI is used in hiring, approving finance, making healthcare recommendations and determining what information is available to people. The risks of ignoring the ethical concerns surrounding AI are significant and will continue to grow unless we show greater ethical awareness. In addition to protecting the end users of AI solutions, developing that awareness is critical to building your long-term credibility.

How to Navigate the Ethical Challenges in Artificial Intelligence

Being intentional is paramount when addressing ethics in Artificial Intelligence. Begin by evaluating your data for bias, creating transparency in your processes, and establishing accountability before you deploy. Invest in security against cyberattacks. Commit to helping your workforce adapt to change. Most of all, include ethics within your AI strategy as a core component and not merely an ancillary discussion. The ethical challenges in Artificial Intelligence are addressable with proactive rather than reactive behaviour.

Conclusion

Artificial intelligence (AI) technologies create vast opportunities; however, they also carry risk and great responsibility. As AI technologies develop, so too will the ethical challenges in using them. The organisations that succeed most with AI will be the ones that apply it appropriately, act with due diligence, stay consciously aware of risk and implement sound ethics. If you plan to be at the leading edge of sustainable innovation through artificial intelligence, you must be conscious of these ethical challenges today.
