Big Mart Sales Prediction: 9 Powerful Steps to Build a Successful Data Science Project

Big Mart Sales Prediction: A Step-by-Step Data Science Project

In the modern retail industry, data-driven decision making plays a critical role in improving sales and optimizing inventory. One of the most popular beginner machine learning projects is Big Mart Sales Prediction, where the goal is to predict the sales of products across different stores using historical data.

This project is widely used in data science learning because it introduces many important concepts such as data preprocessing, exploratory data analysis (EDA), feature engineering, regression modeling, and evaluation metrics.

The dataset used for this project is available on Kaggle and contains information about products, outlets, and historical sales values. By analyzing these variables, we can build a predictive model that estimates the sales of products in different outlets.

In this blog article, we will walk through the complete data science pipeline for Big Mart Sales Prediction, including dataset exploration, cleaning, feature engineering, model training, and evaluation.

Understanding the Big Mart Sales Prediction Problem

The primary goal of Big Mart Sales Prediction is to forecast the sales of individual products in various Big Mart stores based on their characteristics and outlet attributes.

Retail companies often face challenges such as:

- Overstocking or understocking products

- Unpredictable sales patterns

- Inventory mismanagement

- Inefficient pricing strategies

Machine learning models can help solve these problems by predicting future sales based on historical trends.

By building a predictive model, businesses can make better decisions related to inventory planning, marketing strategies, and product placement.

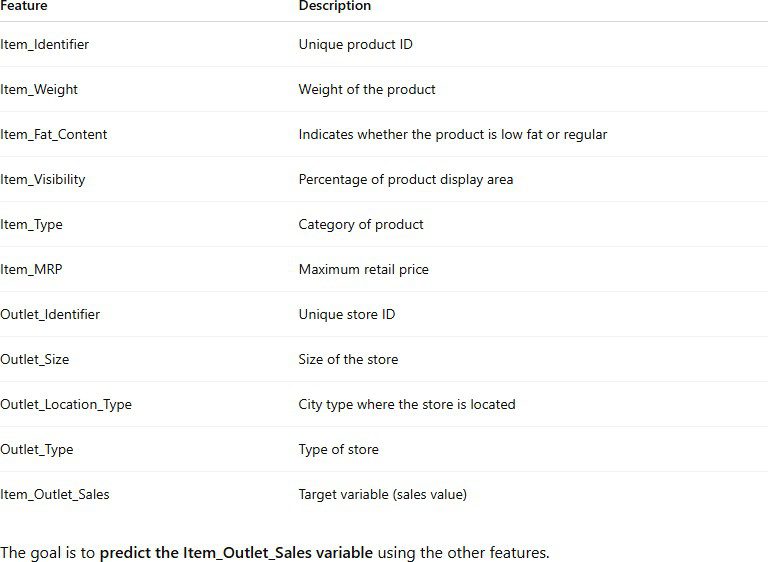

Dataset Overview

The dataset used in this project contains sales records for 1559 products across 10 different Big Mart stores.

It includes both product-level information and store-level attributes.

Key Variables in the Dataset

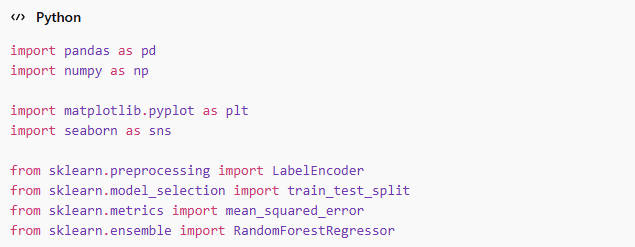

Step 1: Import Required Libraries

The first step in the Big Mart Sales Prediction project is importing necessary Python libraries for data manipulation, visualization, and machine learning.

These libraries are essential for performing data analysis and building predictive models.
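As a rough sketch, the imports for a project like this might look as follows. The exact set of libraries is an assumption here: pandas and NumPy for data handling, Matplotlib for plots, and scikit-learn for modeling.

```python
# Data manipulation
import numpy as np
import pandas as pd

# Visualization
import matplotlib.pyplot as plt

# Machine learning utilities from scikit-learn
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import LabelEncoder
```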

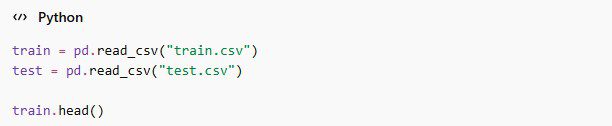

Step 2: Loading the Dataset

After importing the libraries, the next step is loading the dataset into a pandas DataFrame.

The head() function displays the first few rows of the dataset so we can understand its structure.

We can also check the dataset dimensions.

Example output:

(8523, 12)

This means the dataset contains 8523 rows and 12 columns.
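The loading step can be sketched like this. To keep the example runnable without the real file, it reads a three-row toy CSV from memory. The file name `Train.csv` in the comment is a hypothetical placeholder, and the toy rows only illustrate the format.

```python
import io

import pandas as pd

# Toy stand-in for the training file so the sketch runs anywhere.
# With the real data you would call: df = pd.read_csv("Train.csv")  # hypothetical file name
csv_text = """Item_Identifier,Item_Weight,Item_MRP,Outlet_Identifier,Item_Outlet_Sales
FDA15,9.3,249.81,OUT049,3735.14
DRC01,5.92,48.27,OUT018,443.42
NCD19,8.93,53.86,OUT013,994.71
"""
df = pd.read_csv(io.StringIO(csv_text))

print(df.head())  # first rows of the DataFrame (up to five)
print(df.shape)   # tuple of (number of rows, number of columns)
```

For this toy frame the shape is 3 rows by 5 columns. On the full training data it would be the 8523 rows and 12 columns described above.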

Step 3: Exploratory Data Analysis (EDA)

Exploratory Data Analysis helps us understand patterns and relationships in the dataset.

A first check is to count the missing values in each column. In this dataset, missing values appear in:

- Item_Weight

- Outlet_Size
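A minimal sketch of the missing-value check, using a small toy DataFrame in place of the real data:

```python
import numpy as np
import pandas as pd

# Toy data with gaps in the same two columns as the real dataset
df = pd.DataFrame({
    "Item_Weight": [9.3, np.nan, 17.5, np.nan],
    "Outlet_Size": ["Medium", None, "Small", "High"],
    "Item_MRP": [249.8, 48.3, 141.6, 182.1],
})

# isnull() marks missing cells; sum() counts them per column
missing_counts = df.isnull().sum()
print(missing_counts)
```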

Sales Distribution Visualization

This visualization helps us understand how sales values are distributed.
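One way to draw such a plot is a histogram of the target column. The sketch below uses synthetic, right-skewed values as a stand-in for `Item_Outlet_Sales`. The choice of a histogram and of 30 bins is an assumption, not necessarily the plot used originally.

```python
import matplotlib.pyplot as plt
import numpy as np
import pandas as pd

# Synthetic right-skewed values standing in for the real sales column
rng = np.random.default_rng(0)
sales = pd.Series(rng.gamma(shape=2.0, scale=1000.0, size=500), name="Item_Outlet_Sales")

fig, ax = plt.subplots(figsize=(6, 4))
counts, bin_edges, _ = ax.hist(sales, bins=30)
ax.set_xlabel("Item_Outlet_Sales")
ax.set_ylabel("Number of records")
ax.set_title("Distribution of sales (toy data)")
# plt.show()  # uncomment when running interactively
```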

Relationship Between MRP and Sales

Products with a higher MRP (maximum retail price) tend to have higher sales values.
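A quick way to quantify this relationship is the Pearson correlation between the two columns. The numbers below are toy values chosen only to illustrate the call.

```python
import pandas as pd

# Toy values: sales rise roughly in step with MRP
df = pd.DataFrame({
    "Item_MRP": [50.0, 100.0, 150.0, 200.0, 250.0],
    "Item_Outlet_Sales": [600.0, 1500.0, 1900.0, 3100.0, 3600.0],
})

# Pearson correlation between price and sales
corr = df["Item_MRP"].corr(df["Item_Outlet_Sales"])
print(f"Correlation between MRP and sales: {corr:.2f}")
```

To see the relationship visually, `df.plot.scatter(x="Item_MRP", y="Item_Outlet_Sales")` draws the corresponding scatter plot.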

Step 4: Data Cleaning

Real-world datasets usually contain inconsistencies and missing values. Cleaning the data improves model performance.

Handling Missing Item Weight

We replace missing weights with the mean value.
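A minimal sketch of mean imputation on a toy column:

```python
import numpy as np
import pandas as pd

df = pd.DataFrame({"Item_Weight": [10.0, np.nan, 20.0, np.nan, 15.0]})

# mean() skips NaN, so this averages 10, 20 and 15
mean_weight = df["Item_Weight"].mean()

# Fill the gaps with that mean
df["Item_Weight"] = df["Item_Weight"].fillna(mean_weight)
print(df)
```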

Handling Missing Outlet Size

Categorical missing values are replaced using the mode.
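The same idea for a categorical column, again on toy data:

```python
import pandas as pd

df = pd.DataFrame({"Outlet_Size": ["Medium", None, "Small", "Medium", None, "High"]})

# mode() ignores missing values and returns the most frequent label(s)
most_common = df["Outlet_Size"].mode()[0]

# Fill the gaps with the most frequent label
df["Outlet_Size"] = df["Outlet_Size"].fillna(most_common)
print(df["Outlet_Size"].value_counts())
```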

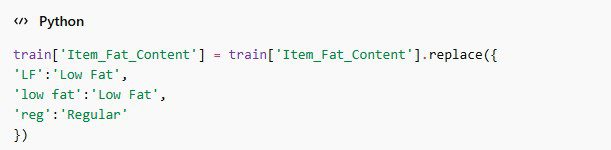

Fixing Item Fat Content

The dataset contains inconsistent labels such as:

- LF

- low fat

- reg

These can be standardized to two labels: Low Fat and Regular.
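One way to do this is a simple replacement mapping. The sketch assumes the column is named `Item_Fat_Content` and that the clean labels are `Low Fat` and `Regular`.

```python
import pandas as pd

df = pd.DataFrame({"Item_Fat_Content": ["Low Fat", "LF", "low fat", "Regular", "reg"]})

# Map each inconsistent spelling onto one canonical label
df["Item_Fat_Content"] = df["Item_Fat_Content"].replace(
    {"LF": "Low Fat", "low fat": "Low Fat", "reg": "Regular"}
)
print(df["Item_Fat_Content"].unique())
```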

Step 5: Feature Engineering

Feature engineering helps improve model accuracy by creating new variables or transforming existing ones.

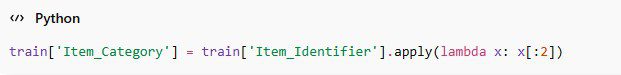

Extracting Product Category

We can extract product categories from the item identifier.

For example:

- FD = Food

- DR = Drinks

- NC = Non-Consumable
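The prefix lookup above can be sketched as follows. The new column name `Item_Category` is a hypothetical choice for illustration.

```python
import pandas as pd

df = pd.DataFrame({"Item_Identifier": ["FDA15", "DRC01", "NCD19"]})

# The first two characters of the identifier encode the broad category
prefix_to_category = {"FD": "Food", "DR": "Drinks", "NC": "Non-Consumable"}
df["Item_Category"] = df["Item_Identifier"].str[:2].map(prefix_to_category)
print(df)
```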

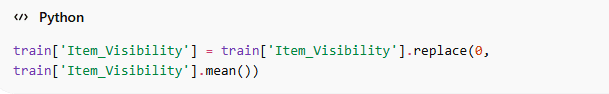

Handling Zero Visibility

Some items have a visibility of zero, which is unrealistic for a product that is actually on a shelf. These values can be replaced with the mean visibility.
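A minimal sketch of one way to handle this, assuming the column is named `Item_Visibility`. Here the zeros are replaced with the mean of the non-zero entries, which is a design choice. Using the plain column mean instead is an equally simple alternative.

```python
import pandas as pd

df = pd.DataFrame({"Item_Visibility": [0.0, 0.02, 0.04, 0.0, 0.06]})

# Mean computed over the non-zero entries only (assumption, see text above)
nonzero_mean = df.loc[df["Item_Visibility"] > 0, "Item_Visibility"].mean()

# Replace the zero entries with that mean
df["Item_Visibility"] = df["Item_Visibility"].replace(0, nonzero_mean)
print(df)
```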

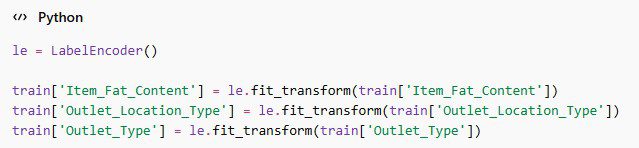

Step 6: Encoding Categorical Variables

Machine learning models require numerical input, so categorical variables must be converted into numbers.

Encoding allows the model to process categorical data effectively.
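Two common options are label encoding and one-hot encoding. The sketch below shows both on toy data. Which columns get which treatment is an assumption here, and `Outlet_Size_Code` is a hypothetical column name.

```python
import pandas as pd
from sklearn.preprocessing import LabelEncoder

df = pd.DataFrame({
    "Outlet_Size": ["Medium", "Small", "High", "Medium"],
    "Outlet_Type": ["Supermarket Type1", "Grocery Store",
                    "Supermarket Type1", "Supermarket Type2"],
})

# Label encoding: each category becomes an integer (classes sorted alphabetically)
encoder = LabelEncoder()
df["Outlet_Size_Code"] = encoder.fit_transform(df["Outlet_Size"])

# One-hot encoding: one indicator column per category
df = pd.get_dummies(df, columns=["Outlet_Type"])
print(df)
```

Note that the label codes follow alphabetical order (High, Medium, Small), not the natural size order. If the order matters, an explicit mapping may be a better fit.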

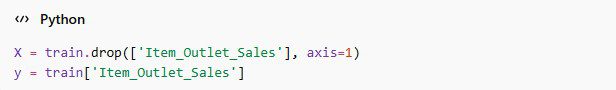

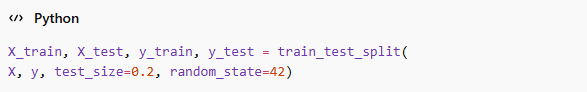

Step 7: Preparing Training and Testing Data

Now we separate input features and the target variable.

Next, we split the dataset into training and testing sets.

Typically, 80% of the data is used for training and 20% for testing.
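A sketch of the split using scikit-learn's `train_test_split` on synthetic data. The feature columns and the `random_state` value are illustrative assumptions.

```python
import numpy as np
import pandas as pd
from sklearn.model_selection import train_test_split

# Synthetic stand-in for the cleaned dataset
rng = np.random.default_rng(42)
df = pd.DataFrame({
    "Item_MRP": rng.uniform(30, 270, size=100),
    "Item_Weight": rng.uniform(4, 21, size=100),
})
df["Item_Outlet_Sales"] = 15 * df["Item_MRP"] + rng.normal(0, 200, size=100)

# Separate the input features from the target variable
X = df.drop(columns=["Item_Outlet_Sales"])
y = df["Item_Outlet_Sales"]

# Hold out 20% of the rows for testing
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42
)
print(X_train.shape, X_test.shape)
```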

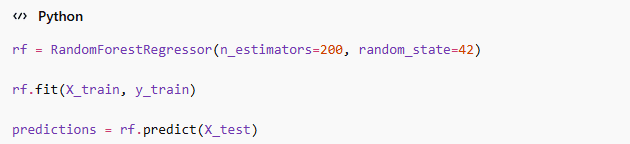

Step 8: Building the Machine Learning Model

Now we train a machine learning model to perform Big Mart Sales Prediction.

Random Forest Regressor

Random Forest is a powerful ensemble learning algorithm.

predictions = rf.predict(X_test)

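Random Forest averages the predictions of many decision trees, each trained on a random sample of the data, which usually gives more accurate and more stable predictions than a single tree.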
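A minimal training sketch on synthetic data. The hyperparameters (`n_estimators=100`, `random_state=0`) are illustrative assumptions, not tuned values.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import train_test_split

# Synthetic features and target standing in for the prepared dataset
rng = np.random.default_rng(0)
X = rng.uniform(0, 1, size=(300, 3))
y = 2000 * X[:, 0] + 500 * X[:, 1] + rng.normal(0, 50, size=300)

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0
)

# Fit a forest of 100 trees, then predict on the held-out rows
rf = RandomForestRegressor(n_estimators=100, random_state=0)
rf.fit(X_train, y_train)
predictions = rf.predict(X_test)
print(predictions[:5])
```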

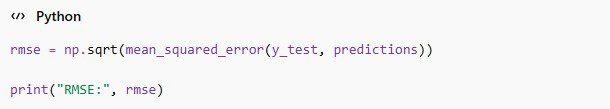

Step 9: Evaluating the Model

Model evaluation helps us determine how well the model performs on unseen data.

A common metric for regression problems, and the one used in this project, is Root Mean Square Error (RMSE).

Lower RMSE values indicate better model performance.
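RMSE can be computed by taking the square root of scikit-learn's `mean_squared_error`. The values below are toy numbers for illustration.

```python
import numpy as np
from sklearn.metrics import mean_squared_error

y_true = np.array([100.0, 200.0, 300.0, 400.0])
y_pred = np.array([110.0, 190.0, 330.0, 360.0])

# RMSE is the square root of the mean squared error
rmse = np.sqrt(mean_squared_error(y_true, y_pred))

# The same value computed by hand, as a sanity check
rmse_manual = np.sqrt(np.mean((y_true - y_pred) ** 2))
print(f"RMSE: {rmse:.2f}")
```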

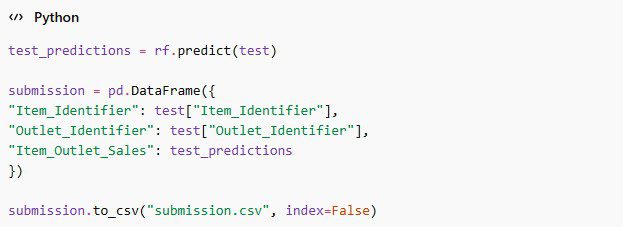

Step 10: Predicting Sales on Test Dataset

Finally, we use the trained model to predict sales for the test dataset.

The predictions can then be saved to a CSV file and uploaded to Kaggle for evaluation.
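A sketch of this last step on toy data. The submission layout (`Item_Identifier`, `Outlet_Identifier`, `Item_Outlet_Sales`) and the file name `submission.csv` are assumptions. The example writes to an in-memory buffer so it runs without touching the disk.

```python
import io

import pandas as pd
from sklearn.ensemble import RandomForestRegressor

# Toy training data (already cleaned) and a toy test set without the target
train = pd.DataFrame({
    "Item_MRP": [50.0, 100.0, 150.0, 200.0, 250.0, 120.0],
    "Item_Weight": [9.3, 5.9, 17.5, 19.2, 8.9, 12.0],
    "Item_Outlet_Sales": [700.0, 1500.0, 2200.0, 3000.0, 3700.0, 1800.0],
})
test = pd.DataFrame({
    "Item_Identifier": ["FDW58", "FDW14"],
    "Outlet_Identifier": ["OUT049", "OUT017"],
    "Item_MRP": [107.9, 87.3],
    "Item_Weight": [20.75, 8.3],
})

features = ["Item_MRP", "Item_Weight"]
rf = RandomForestRegressor(n_estimators=50, random_state=0)
rf.fit(train[features], train["Item_Outlet_Sales"])

# Build the submission table: identifiers plus predicted sales
submission = test[["Item_Identifier", "Outlet_Identifier"]].copy()
submission["Item_Outlet_Sales"] = rf.predict(test[features])

# On disk this would be: submission.to_csv("submission.csv", index=False)
buffer = io.StringIO()
submission.to_csv(buffer, index=False)
print(buffer.getvalue())
```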

Real-World Applications of Big Mart Sales Prediction

The Big Mart Sales Prediction model has many practical uses in the retail industry.

Inventory Management

Retailers can predict product demand and maintain optimal stock levels.

Store Performance Analysis

Companies can identify which outlets perform better.

Marketing Strategy

Sales predictions help businesses design targeted marketing campaigns.

Pricing Optimization

Retailers can adjust pricing strategies to maximize revenue.

Challenges in Sales Prediction

Despite its benefits, sales prediction projects face several challenges:

- Missing data in the dataset

- High number of categorical variables

- Feature engineering complexity

- Model overfitting

Proper preprocessing and model selection help overcome these challenges.

Frequently Asked Questions (FAQs)

What is Big Mart Sales Prediction?

Big Mart Sales Prediction is a machine learning project that predicts product sales across different outlets using historical retail data. The goal is to build a regression model that can forecast the sales of individual products based on their specific characteristics (like weight and price) and store attributes (like location and size).

By analyzing these variables, companies can:

Forecast Revenue: Estimate future income based on historical trends.

Identify Patterns: Understand which products perform best in which types of cities or stores.

Reduce Losses: Avoid inventory mismanagement by predicting where demand will be high or low.

Which dataset is used for this project?

The dataset used for the Big Mart Sales Prediction project is a classic retail dataset sourced from Kaggle. It contains sales records for 1,559 products across 10 different outlets. The data is divided into product-level information and store-level attributes, allowing for a comprehensive multi-dimensional analysis.

Key Details of the Dataset:

Total Records: 8,523 rows of historical sales data.

Features: It includes 12 key variables such as Item Weight, Item MRP, Outlet Size, and Outlet Type.

Target Variable: The primary goal is to predict the Item_Outlet_Sales column.

Complexity: The dataset is known for its missing values (in Item_Weight and Outlet_Size) and inconsistent categorical labels, making it perfect for practicing data cleaning.

Which algorithms work best for this project?

Common algorithms include:

- Linear Regression

- Decision Trees

- Random Forest

- Gradient Boosting

- XGBoost

Why is feature engineering important in this project?

Feature engineering is the process of using domain knowledge to create new variables that help machine learning algorithms perform better. In the Big Mart Sales project, raw data alone may not capture all the patterns. Feature engineering is crucial because:

Improved Accuracy: It helps the model understand complex relationships, such as how the first two letters of an Item_Identifier represent broad categories like Food (FD) or Drinks (DR).

Noise Reduction: By transforming inconsistent data (like fixing “LF” to “Low Fat”), we remove confusion for the model, leading to more stable predictions.

Handling Unrealistic Data: Some items have a visibility of 0, which is practically impossible for a product on a shelf. Replacing these with the mean visibility ensures the model doesn’t learn incorrect patterns.

Numerical Transformation: Since machine learning models only understand numbers, encoding categorical variables into a numerical format is a vital part of the engineering process.

What evaluation metric is used for Big Mart Sales Prediction?

The most commonly used evaluation metric for the Big Mart Sales Prediction project is Root Mean Square Error (RMSE). Since this is a regression problem where we predict a continuous numerical value (sales), RMSE helps us understand the accuracy of our model.

Why do we use RMSE?

Error Measurement: RMSE measures the average magnitude of the error by calculating the square root of the average of squared differences between predicted and actual values.

Sensitivity to Outliers: It gives a relatively high weight to large errors, which is useful in retail because missing a high-sales forecast can lead to significant inventory issues.

Easy Interpretation: Lower RMSE values indicate that the model’s predictions are closer to the actual sales values, representing better model performance.
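Written out as a formula, with y_i the actual sales, ŷ_i the predicted sales, and n the number of records (the symbols are our own notation), the definition above reads:

```latex
\mathrm{RMSE} = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(y_i - \hat{y}_i\right)^2}
```

As a small worked example with made-up numbers: for actual values (100, 200, 300) and predictions (110, 190, 330), the squared errors are 100, 100, and 900. Their mean is about 366.67, so the RMSE is about 19.15.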

Is this project suitable for beginners in data science?

Yes, the Big Mart Sales Prediction project is one of the most recommended starting points for anyone entering the field of Data Science. It is highly suitable for beginners because:

End-to-End Workflow: It covers the entire machine learning pipeline, from data exploration and cleaning to model training and evaluation.

Real-World Complexity: Unlike “clean” textbook datasets, this Kaggle dataset contains missing values and inconsistent labels, providing a realistic experience of data preprocessing.

Foundation for AI: Mastering this project builds the necessary foundation in predictive modeling that is required for more advanced AI systems.

High Impact: Sales forecasting is a core business problem, making this project a valuable addition to any beginner’s portfolio.

Real-World Applications of Sales Prediction

The Big Mart Sales Prediction model is not just a coding exercise; it has practical uses in the global retail industry:

Inventory Management: Retailers can predict product demand to maintain optimal stock levels and avoid overstocking.

Store Performance: Companies can identify which outlets are high-performing based on specific store attributes.

Strategic Marketing: Sales forecasts help businesses design targeted marketing and pricing strategies to maximize revenue.

🎓 Conclusion: Your First Step into Machine Learning

The Big Mart Sales Prediction project is a great hands-on exercise for learning machine learning and data analysis. This project demonstrates the complete data science pipeline—from data exploration and preprocessing to feature engineering and model training. By working with real-world retail data, data scientists can develop models that predict product sales and generate valuable business insights.

For beginners in data science, this project provides a strong foundation in machine learning, data preprocessing, and predictive modeling. While challenges like missing data and feature engineering complexity exist, mastering this workflow is essential for success in the field. With additional improvements such as hyperparameter tuning and ensemble models like XGBoost, the predictive accuracy can be further enhanced.

Professional Resources & Support

Kaggle Achievement: This project is a Bronze Medal Winner 🥉. Explore the interactive notebook here: Kaggle: Big Mart Sales Prediction.

YouTube Channel: Subscribe to AI Learner Tech for a step-by-step video breakdown of this project 🎥.

GitHub Organization: Access the source code and datasets on our GitHub Repo 📂.

Support: For collaborations or questions, reach out to us at contact@ailearner.tech 📧.

Author: AI Learner Tech

AI Learner Tech is a premier research and educational hub dedicated to mastering Artificial Intelligence, Machine Learning, and Computer Vision. We bridge the gap between complex academic theories and real-world industrial applications. Join our community to access high-quality tutorials, open-source projects, and expert insights. Website: ailearner.tech